In my previous column (Let’s Bid Adieu to Block Devices and SCSI), I documented all the reasons why block device and SCSI technologies are ripe for replacement. The standard that I hope will replace those technologies is Object-Based Storage Devices (OSD), from T10.org. T10 is the same group that brought you SCSI, so if T10 is ready for a change, we should be too.

Of course, I could be totally wrong and OSD could become another standard like HiPPI, the High Performance Parallel Interface (here I’m showing both my age and my HPC roots). HiPPI never became popular in the early 1990s for a variety of reasons and is almost forgotten history. Will OSD become a popular standard or forgotten history?

The biggest issue with the current block-based storage framework, in my opinion, is the lack of communication between the file system and the physical storage, a problem compounded in shared file systems. This manifests itself in many ways, but the major impact is on storage performance and scalability.

Another area that has been a concern for a number of organizations and the storage community is storage security. OSD solves many of the security problems of the current framework. You might want to review Securing Data Across SANs, WANs, and Shared File Systems for some background on some of the current security issues that affect SANs.

Performance and Scalability

With file-oriented OSD in the data path, information about each object is known at every level, and objects communicate with object managers through the data path. That gives you a framework for passing information that does not exist with standard SCSI.

If you create a file on a server running an OSD file system, using the standard UNIX open(2) system call or the C library fopen(3) call, that object is managed without any knowledge or intervention from the programmer.
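
To make that concrete, here is a minimal sketch of ordinary application code; nothing in it would need to change on an OSD-backed file system. It is written in Python, where `os.open` is a thin wrapper around open(2) and the built-in `open` stands in for the buffered C library call. The file names and the temporary directory are placeholders chosen for illustration.

```python
import os
import tempfile

def create_files(directory):
    """Create two files the ordinary way; return their sizes in bytes."""
    raw_path = os.path.join(directory, "raw.dat")
    buffered_path = os.path.join(directory, "buffered.dat")

    # Unbuffered path: os.open() is a thin wrapper around open(2).
    fd = os.open(raw_path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o644)
    try:
        os.write(fd, b"hello")
    finally:
        os.close(fd)

    # Buffered path: the built-in open() stands in for the C library call.
    with open(buffered_path, "wb") as f:
        f.write(b"hello, object")

    return os.path.getsize(raw_path), os.path.getsize(buffered_path)

with tempfile.TemporaryDirectory() as tmp:
    sizes = create_files(tmp)
```

The programmer sees only paths, descriptors and bytes. Whether those bytes end up in blocks or in objects is decided below the system call interface.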

On the host, the object looks and behaves just like files with today’s UNIX and Windows file systems, but under the covers, the way the file system deals with the storage is completely different. One of the biggest differences is the process of allocation within the file system (see Storage Focus: File System Fragmentation for more about file system allocation).

With current file systems, the file system understands what space has been allocated and what space has not been allocated by looking at a map and allocating the actual data space from within that map. The representation of the allocation map can vary between file systems.

With OSD, allocation works completely differently. The file system queries the object manager or managers associated with that file system. Those managers could reside on different disk drives or different object manager controllers (what we now call RAID controllers), or a combination of both. The object managers provide the file system with information on how much space is available. The file system keeps track of the total space available, but not the specific allocations and location of any of the space for a file.
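
The division of labor described above can be sketched as a toy model. The class names, the byte-count capacities and the "most free space wins" placement rule are all assumptions made for illustration; none of them comes from the OSD standard.

```python
class ObjectManager:
    """Hypothetical object manager: owns its space and hides the layout."""

    def __init__(self, name, capacity_bytes):
        self.name = name
        self.capacity = capacity_bytes
        self._objects = {}  # object id -> bytes reserved; private to the manager

    def free_space(self):
        return self.capacity - sum(self._objects.values())

    def allocate(self, object_id, size):
        if size > self.free_space():
            raise RuntimeError(f"{self.name}: not enough space")
        # Where the bytes land on the media is decided here, not by the file system.
        self._objects[object_id] = self._objects.get(object_id, 0) + size


class FileSystem:
    """Tracks total free space only; it keeps no block allocation map."""

    def __init__(self, managers):
        self.managers = managers

    def total_free(self):
        return sum(m.free_space() for m in self.managers)

    def create(self, object_id, size):
        # Hand the request to the manager with the most free space (assumed policy).
        target = max(self.managers, key=lambda m: m.free_space())
        target.allocate(object_id, size)
        return target.name


fs = FileSystem([ObjectManager("om0", 1000), ObjectManager("om1", 2000)])
placed_on = fs.create("file-a", 500)
```

Note that `FileSystem` never touches `_objects`. It can answer "how much space is left?" but not "where is file-a stored?", which is the separation the paragraph above describes.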

RAID Could Become ‘A Thing of the Past’

When you create a file under OSD, given the allocation policy that is allowed within the OSD file system, a certain amount of space is allocated. The file system passes the request to the object managers, and the object managers allocate and manage the available space. Each object manager could have its own policies for allocation. RAID as we know it would likely become a thing of the past.

What happens if you have a file system with a number of small files and a number of larger files within the same file system? In today’s fixed block world, RAID-1 (mirroring) would likely be better for performance and access time for the small files, and RAID-5 (parity) would likely be better for performance, access time and space efficiency for the large files. Space efficiency is an important advantage of RAID-5 over RAID-1, since more of the drives are used for actual data space. For example, with RAID-5 4+1, you have one parity drive for every four data drives. With RAID-1, you need one mirror drive for every data drive, so the same four data drives require four mirrors.
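
The space-efficiency comparison is simple arithmetic. The sketch below assumes four data drives in both cases, with RAID-1 taken to mean two-way mirroring (one mirror per data drive); the function name is made up for this example.

```python
def usable_fraction(data_drives, redundancy_drives):
    """Fraction of all drives that holds user data."""
    return data_drives / (data_drives + redundancy_drives)

raid5_4plus1 = usable_fraction(4, 1)   # 4 data drives + 1 parity drive
raid1 = usable_fraction(4, 4)          # 4 data drives + 4 mirror drives

print(f"RAID-5 4+1: {raid5_4plus1:.0%} usable")
print(f"RAID-1:     {raid1:.0%} usable")
```

Under those assumptions, RAID-5 4+1 leaves 80% of the raw capacity for data and RAID-1 leaves 50%.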

With RAID as we know it today, you cannot have both types of RAID dynamically allocated for a single file system. Some RAID vendors have attempted this, but successful dynamic RAID allocation requires the file system and the RAID to communicate, which is not possible with today’s block-oriented storage. Without that communication, a single RAID that mixes RAID-1 and RAID-5 cannot operate efficiently.

However, with OSD, the object manager can be tuned to do the equivalent of RAID-1 for small files and RAID-5 for larger files, and dynamically allocate objects. All of the problems with space efficiency and performance are taken care of since the file system passes the file allocation information to the object managers.

If a file is opened to extend the end of a file that was initially small, the object manager could change the place the file was allocated and the policy of that file to allow it to be changed from the equivalent of RAID-1 to RAID-5, if that is the policy specified by the object manager. The ability to dynamically change the allocation of the file and the number of disks used for the file allows performance to change with file sizes. This is transparent to the file system, since all it passes is allocation policy for the file and data.
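
A size-driven policy of this kind might look like the following sketch. The 1 MiB threshold, the layout labels and the `ManagedObject` class are hypothetical; an actual object manager would define its own policy and would physically move the data rather than just record the change.

```python
SMALL_FILE_LIMIT = 1 << 20  # 1 MiB; an assumed threshold, not from any standard

def choose_layout(size_bytes, small_limit=SMALL_FILE_LIMIT):
    """Mirror small objects, use parity striping for large ones."""
    return "mirror" if size_bytes <= small_limit else "parity"

class ManagedObject:
    """Hypothetical object whose layout follows its size."""

    def __init__(self):
        self.size = 0
        self.layout = choose_layout(0)
        self.migrations = 0

    def append(self, nbytes):
        self.size += nbytes
        new_layout = choose_layout(self.size)
        if new_layout != self.layout:
            # A real manager would relocate the data here; the sketch only records it.
            self.layout = new_layout
            self.migrations += 1

obj = ManagedObject()
obj.append(4096)        # still small: stays mirrored
first = obj.layout
obj.append(8 << 20)     # grows past the threshold: switches to the parity layout
```

The caller only appends data. The switch from the mirrored layout to the parity layout happens inside the object, which mirrors the transparency described above.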

You could theoretically change what we now know as RAID level by just the size of the file. OSD would theoretically allow, if the user had the correct permissions, allocation of files across a specific number of disk drives to match bandwidth.
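
Matching the number of drives to a bandwidth target is a ceiling division, at least as a first estimate. The sketch below assumes throughput scales linearly with drive count, which real hardware only approximates, and the rates used are invented example figures.

```python
import math

def drives_for_bandwidth(target_mb_per_s, per_drive_mb_per_s):
    """Smallest number of drives whose combined streaming rate meets the target."""
    if target_mb_per_s <= 0 or per_drive_mb_per_s <= 0:
        raise ValueError("rates must be positive")
    return math.ceil(target_mb_per_s / per_drive_mb_per_s)

# Example figures only: a 400 MB/s target on drives that stream 60 MB/s each.
stripe_width = drives_for_bandwidth(400, 60)
```

With these example figures the file would be spread across seven drives.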

Security

The current SCSI and POSIX standards don’t provide nearly the same level of security that you can find with networking technology. To some degree, the reason is obvious: storage was generally local, so security was not an issue. That, of course, is changing rapidly.

As shared file systems become more widely used, the data could be local, or it could be remote, and you do not know if it is directly attached locally, directly attached over dark fiber with Fibre Channel, or possibly running from SAN to WAN.

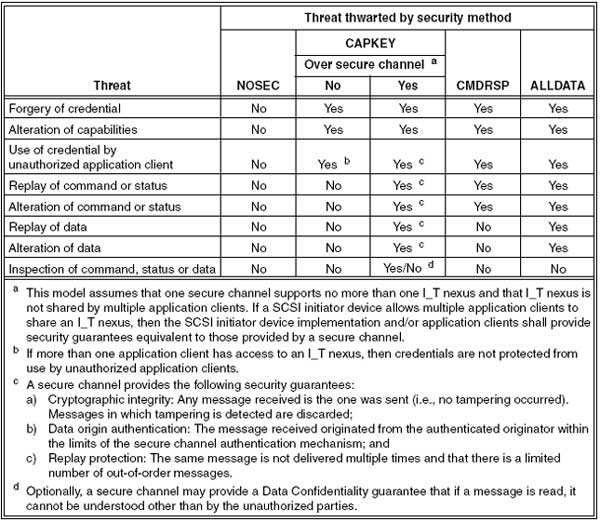

OSD does not offer security nirvana, but the framework offers far more security than we currently have. The following is taken directly from the OSD standard document:

[Table: OSD Security Methods]

As you can see, the standard provides far greater security than standard file systems, even with ACLs (Access Control Lists), because with a file system, someone can still get to the data by reading the raw device, which is not very secure. Add to that the complexity of shared file systems and security issues between operating systems, and OSD provides a basic framework for security that could be implemented across operating system boundaries.

To me, the big feature OSD provides is not host security, but the security and authentication of every OSD device. That means that HBAs, RAID controllers and disks all must be OSD-compliant and authenticated. You cannot just pull a disk out and plug it in somewhere else, and if the data on the disk is encrypted, it provides far more security than we have today. If this encryption technology were applied to tapes, you could ship sensitive data via standard mail with no concerns for data security (damage in shipping is another matter, however).
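
The general idea of a device that only honors authenticated requests can be illustrated with a keyed hash. This is a generic sketch using Python's standard `hmac` module, not the OSD wire format or any method defined in the standard; the key, the command string and both function names are invented for the example.

```python
import hashlib
import hmac

def sign_command(shared_key, command):
    """Return a hex tag binding the command bytes to the shared key."""
    return hmac.new(shared_key, command, hashlib.sha256).hexdigest()

def device_accepts(shared_key, command, tag):
    """Device-side check: recompute the tag and compare in constant time."""
    expected = sign_command(shared_key, command)
    return hmac.compare_digest(expected, tag)

key = b"key-provisioned-for-this-device"    # placeholder secret
cmd = b"READ object=0x2a offset=0 length=4096"
tag = sign_command(key, cmd)

ok = device_accepts(key, cmd, tag)                          # matching key
moved = device_accepts(b"some-other-device-key", cmd, tag)  # different key
```

Here `ok` is true and `moved` is false: a request signed for one device fails the check on a device holding a different key, which is the property that keeps a relocated disk from simply being read elsewhere.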

Now All We Need Are OSD Products

Of course, someone still has to build an OSD system with a file system, HBAs, switches, storage managers, disk and tape.

The standard must still be implemented end-to-end, which means that file system vendors have to talk OSD, OSD storage manager vendors (RAID controller vendors) have to talk OSD, and most importantly, disk drive vendors like Seagate and Hitachi have to build OSD disks. Without the disk drive vendors building disks, current RAID storage vendors cannot build object storage managers, and without object storage managers, file system vendors cannot create object-based file systems.

A number of companies have developed OSD-based products without the disk drives being completed. Panasas, for one, has developed an OSD hardware platform. This is just one of many hardware and software products that are starting to appear on the market. Sounds similar to how RAID products evolved, and then Fibre Channel products, which is not surprising, since before standards are finalized and completed, many vendors develop products to develop (and corner) the market.

OSD’s future is in question, however. The factors threatening the standard are lack of availability of disk support, and lack of a complete end-to-end understanding of what OSD provides.

By a wide margin, the second factor is the biggest risk. Management at organizations needs to understand what OSD can provide on both the performance and security fronts, but that does not come for free. If all they are concerned about is cost of storage, which for many is the current mode of operation, then we are in big trouble. We all need to look beyond the current cost per MB, GB, TB (and soon PB) and start looking at storage as an integrated service, not as a commodity device. For without this change, we cannot scale, and we will not be secure. This depends on both vendors and industry educating customers, and it will not happen overnight. We all need to help and become more open-minded in this area, and we need products to move from R&D to reality.