In the last several years, there has been an increasing number of storage options. Initially we had just magnetic hard drives with a single rotational speed. Then they started to come in several varieties. Now we have a range of drive speeds starting at 15,000 rpm at the top end, followed by 10,000 rpm drives, then the ubiquitous 7,200 rpm drives, and slower drives with speeds such as 5,900, 5,400 and 4,500 rpm, and even variable-speed drives.

The rotational speed of the disk drive is a strong indicator of performance, price, capacity and power usage. Typically the higher the speed, the more expensive the drive. And usually a high-speed drive has a smaller capacity, better performance and higher power consumption. As the drive speed comes down, the drive price decreases, the capacity increases, the performance decreases, and the power usage decreases.

There are other sources of drive variation, for example, drive cache size and physical drive size (2.5″ and 3.5″). There is also the drive communication protocol such as SATA, SAS or Fibre Channel. There are also protocol speed differences such as 6 Gigabits per second (Gbps), 3 Gbps and slower (although these are older drives).

Now there is a new type of drive that is coming out. These drives are referred to as Shingled Magnetic Recording (SMR) drives.

How SMR Drives Operate

A conventional hard drive has two heads. One of them is for writing, and one is for reading. The write head is larger than the read head, which means that the tracks on a hard drive platter have to be wider than necessary from a read perspective. In addition, there is guard space between tracks so that the write head doesn’t disturb the data on neighboring tracks. This layout is illustrated below in Figure 1.

Figure 1: Conventional hard drive track layout

What SMR drives do is to reduce the guard space between tracks and overlap the tracks. Figure 2 below is a diagram that illustrates this configuration.

Figure 2: SMR hard drive track layout

The tracks are overlapped so they look like roof shingles, hence the name “Shingled Magnetic Recording.” The diagram shows that the write head writes the data across the entire track (the blue and green stripes in a specific track). But the drive “trims” the data to the width the read head actually needs to read the data (this is the blue area of each track). This allows the areal density, the number of bits in a specific unit area, to be increased, giving us more drive capacity.

Let’s explore what happens when the drive reads and writes data to the shingled tracks. Figure 3 below shows what happens when the data is starting to be written to the drive.

Figure 3: SMR hard drive track layout

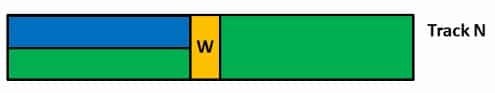

The tracks are overlapped and are in green. The drive’s write head writes the data to the full track. Notice that the data is written both to Track N and Track N+1. Then the drive “trims” the data behind the write head to the width of the read head. The trimmed data is shown in blue.

The drive head continues writing to the track, leaving behind the trimmed data as shown in Figure 4.

Figure 4: SMR hard drive track layout

Only a single track is shown so that you can see how the write head writes to the entire width of the track but is trimmed to the width of the read head (the blue section of the track).

If you look at how the data is written to the tracks, you might notice an issue. The write head writes to the current track, such as Track N, but it also writes to the overlapping track, Track N+1, because of the width of the write head. What if there is already data on Track N+1 where the write head needs to write data? This is shown in Figure 5 below.

Figure 5: SMR hard drive track layout

The write head is in yellow and it needs to write some data. The post-trimmed data is shown as “New Data,” and the data it will overwrite is shown as “Disturbed Data” and is in red. This data already exists and will be overwritten if the “New Data” is written.

To get around the problem of data over-write, the drive has to read the data that is about to be overwritten and write it somewhere else on the drive. After that, it can write the new data in the original location. Therefore, a simple write becomes a read-write-write (one read and two writes). I’m not counting the “seeks” that might have to be performed, but you can see how a simple data update can lead to a large amount of activity.
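The read-write-write sequence can be sketched with a toy model. This is a minimal illustration, not drive firmware: the `tracks` list, the `spare` relocation area and the function name `update_track` are all assumptions made for this example, and the model assumes that writing Track N disturbs only Track N+1.

```python
def update_track(tracks, n, new_data, spare):
    """Write new_data to track n and return the list of operations performed.

    tracks: list where each entry is the data on that track (None = empty).
    spare:  hypothetical relocation area elsewhere on the drive.
    """
    ops = []
    neighbor = n + 1
    # The wide write head also covers track n+1. If that track holds data,
    # the data must be rescued before the new write can proceed.
    if neighbor < len(tracks) and tracks[neighbor] is not None:
        saved = tracks[neighbor]
        ops.append("read")        # read the data about to be disturbed
        spare.append(saved)
        ops.append("write")       # write it somewhere else on the drive
        tracks[neighbor] = None   # old location no longer holds valid data
    tracks[n] = new_data
    ops.append("write")           # finally write the new data
    return ops


tracks = ["old A", "old B", None]
spare = []
print(update_track(tracks, 0, "new A", spare))  # neighbor holds data
print(update_track(tracks, 1, "new C", spare))  # neighbor is empty
```

A real drive would also have to remember where the relocated data went; that mapping is left out here to keep the sketch short.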

For sequential writes, SMR drives are great because it’s just a pure write operation (no reading and no seeking). However, as soon as an application wants to overwrite a piece of data or perform a data update, the write suddenly becomes a read-write-write operation.

In an additional scenario, if a block is erased you suddenly have a “hole” in the drive that a file system will likely use at some point. There may be data on neighboring tracks surrounding the hole. A typical file system doesn’t know whether an unused block has neighboring tracks with data, so it may decide to write into that block. Therefore the disk will experience a read-write-write situation again.

IO elevators within the kernel try to combine different writes to make fewer sequential writes rather than a large number of smaller writes that are possibly random. This can help SMR drives, but only to a certain degree. To make writing efficient, the elevator needs to store a lot of data and then look for sequential combinations. This results in increased memory usage, increased CPU usage and increased write latency. The result is that the write speed can be degraded for both sequential write IO and random write IO, depending upon where the data is to be written.
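The merging idea can be sketched in a few lines. This is only an illustration of the general technique, not the algorithm any particular kernel elevator uses. The request format (`(start_block, length)` tuples) and the function name `merge_requests` are assumptions for the example, and the requests are assumed not to overlap.

```python
def merge_requests(requests):
    """Merge (start_block, length) write requests into contiguous runs."""
    merged = []
    # Sorting by start block is the "store a lot of data and look for
    # sequential combinations" step; it requires buffering the requests.
    for start, length in sorted(requests):
        if merged and merged[-1][0] + merged[-1][1] == start:
            # This request begins exactly where the previous run ends.
            prev_start, prev_len = merged[-1]
            merged[-1] = (prev_start, prev_len + length)
        else:
            merged.append((start, length))
    return merged


pending = [(10, 2), (0, 4), (4, 3), (12, 1)]
print(merge_requests(pending))  # four small writes become two larger runs
```

The more requests that are held back before sorting, the better the chance of finding long runs, which is where the extra memory use and write latency come from.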

Therefore many times you will see SMR drives referred to as “archive drives.” That is, once the data is written, it is read much more than it is written, which sounds more like archive storage. However, the appeal of vastly increased drive capacity has many people working on ways to overcome the write issues of SMR drives.

Improving the Situation

How to “work around” the idiosyncrasies of SMR drives has been a field of study for several years. There have been many approaches to “fixing” the overwrite issue in SMR drives, but I’m only going to cover a few of them in this article starting with drive banding.

Drive banding

As data is written to the SMR drive, the probability of having to rewrite neighboring data increases. In the pathological case, a simple rewrite may cause all of the data on the drive to be read and written again. To help avoid this, SMR drive manufacturers have created “bands.” Inside a band there are a fixed number of shingled tracks. Separating bands is a large “guard space” so that the data on an outside track of one band won’t overwrite data on a track in a neighboring band. This increased guard space between bands confines all overwrites caused by data updates to the particular band. If small amounts of data need to be rewritten, the possible cascade of read-write-write cycles to move data to prevent it from being overwritten is contained within the band.
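To see how a band contains the cascade, consider a worst-case sketch in which every track holds data and a track is rewritten in place rather than relocated. The function name `cascade_tracks` and the zero-based track numbering are assumptions for this example.

```python
def cascade_tracks(track, tracks_per_band):
    """Return the tracks that must be rewritten when `track` is updated in place.

    Worst case: every later track in the same band holds data, so rewriting
    one track disturbs the next, which must be rewritten in turn, until the
    guard space at the end of the band stops the cascade.
    """
    band_start = (track // tracks_per_band) * tracks_per_band
    band_end = band_start + tracks_per_band  # first track of the next band
    return list(range(track, band_end))


print(cascade_tracks(5, 4))             # bands of 4 tracks: [5, 6, 7]
print(len(cascade_tracks(5, 100_000)))  # one huge band: 99995 tracks
```

With small bands, the update touches at most a handful of tracks; with no banding at all (one band covering the whole surface), it can ripple across everything written after it.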

Using bands has a small impact on the overall areal drive density but not much. The bands have a reasonable number of tracks relative to the number of larger guard spaces so that the overall areal density impact is minimal. The trade-off is between areal density (fewer guard spaces with a very large number of SMR tracks in a band) and performance (more guard spaces and fewer tracks per band, limiting read-write-write cycles). But so far the verdict is that SMR drive manufacturers are using bands in the newer SMR drives.
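The trade-off can be made concrete with a rough model. The numbers here are purely illustrative: the guard space is assumed to be as wide as two shingled tracks, which is a made-up value, and `band_tradeoff` is a hypothetical helper name.

```python
def band_tradeoff(tracks_per_band, guard_width=2.0):
    """Return (fraction of radial space holding data, worst-case cascade).

    guard_width is measured in units of one shingled track pitch and is an
    assumed value, not a figure from any drive vendor.
    """
    efficiency = tracks_per_band / (tracks_per_band + guard_width)
    worst_case_cascade = tracks_per_band  # rewrite starting at the first track
    return efficiency, worst_case_cascade


for n in (4, 16, 64, 256):
    eff, cascade = band_tradeoff(n)
    print(f"{n:>4} tracks/band: {eff:.1%} of radial space holds data, "
          f"worst-case cascade of {cascade} tracks")
```

Under these assumptions, larger bands waste less space on guard regions but allow longer rewrite cascades, which is the tension described above.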

Over-Provisioning

You may have noticed that SMR drives are somewhat similar to SSDs. When you have to update a small amount of storage on an SSD, you have to read the entire block, update the data, and then write the block back to the SSD. SMR drives are very similar. If you want to update a small bit of data that is surrounded by existing data, you may have to read the other data and then write the data back to the drive. This is also referred to as write amplification. Therefore researchers and engineers have been trying to adapt SSD concepts to SMR drives.

One of the first techniques adapted from SSDs to SMR drives is over-provisioning. Over-provisioning is the technique of reserving space on the drive that is not accessible by the user for use by the drive for storing data. The drive can use this space for anything it wants or needs. In the case of SMR drives this space can be used to “park” data for blocks that are to be overwritten because of a write-update to an existing block. Once the data has been updated, the drive can copy the data from the “parking area” to the final location. This can also be used in an attempt to lay out files in a more sequential manner to reduce the fragmentation of the SMR drive.

Over-provisioning is usually expressed as a percentage with the following definition:

Percentage Over-provisioning = (Physical Capacity – User Capacity) / (User Capacity) × 100

As you can tell from the equation and what you might already intuitively know, over-provisioning takes away user addressable capacity from the drive. For SSDs the trade-off between losing some capacity and the boost in performance is usually a good one. For SMR drives, the trade-off may not be as clear cut, but that is yet to be determined.
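As a quick worked example of the over-provisioning definition, the snippet below computes the percentage for a drive and, going the other way, the user capacity left for a given percentage. The capacities are hypothetical and the function names are made up for this illustration.

```python
def over_provisioning_pct(physical, user):
    """Over-provisioning as a percentage of user capacity."""
    return 100 * (physical - user) / user


def user_capacity(physical, op_pct):
    """User-addressable capacity left after reserving op_pct over-provisioning."""
    return physical / (1 + op_pct / 100)


# Hypothetical drive: 8,800 GB of physical capacity, 8,000 GB exposed to the user.
print(over_provisioning_pct(8800, 8000))   # 10.0 (percent)
print(round(user_capacity(8800, 25)))      # 7040 GB left at 25% over-provisioning
```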

File System Integration

One option for improving the performance from SMR drives is to use a file system more appropriate to the sequential nature of SMR drives. Many people have discussed using log-based file systems on SMR drives to improve performance.

Log-Structured File Systems are a bit different than other file systems with both good points and bad points. Rather than write to a tree structure such as a b-tree or an h-tree, either with or without a journal, a log-structured file system writes all data and metadata sequentially in a continuous stream that is called a log (actually it is a circular log).

The concept of a log-structured file system was developed by John Ousterhout and Fred Douglis. The motivation behind log-structured file systems is that typical file systems lay out data based on spatial locality for rotating media (hard drives). But rotating media tends to have slow seek times, limiting write performance. In addition, it was presumed that most IO would become write-dominated. A log-structured file system takes a new approach and treats the file system as a circular log and writes sequentially to the “head” of the log (the beginning), never overwriting the existing log. This means that seeks are kept to a minimum because everything is sequential, improving write performance. Log-structured file systems seem like a good option for SSDs or SMR drives because of their emphasis on sequential writes rather than random writes. But they have other advantages as well. I haven’t seen a comparison of a log-structured file system to a tree-based one on SMR drives, but the general thought is that they are much better suited for SMR drives than tree-based file systems.
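The core idea, that updates are appended rather than written in place, can be sketched as follows. This is a deliberately simplified illustration and not how any real log-structured file system is implemented: the class name `AppendOnlyLog` is made up, the log is not circular, and there is no cleaning of stale records.

```python
class AppendOnlyLog:
    """Toy log: every write, including an update, goes to the end of the log."""

    def __init__(self):
        self.log = bytearray()  # stands in for the sequentially written media
        self.index = {}         # file name -> (offset, length) of latest version

    def write(self, name, data):
        offset = len(self.log)
        self.log += data                        # always append, never overwrite
        self.index[name] = (offset, len(data))  # point the name at the new copy
        return offset

    def read(self, name):
        offset, length = self.index[name]
        return bytes(self.log[offset:offset + length])


fs = AppendOnlyLog()
fs.write("notes.txt", b"first draft")
fs.write("notes.txt", b"second draft")   # an "update" is just another append
print(fs.read("notes.txt"))              # latest version
print(bytes(fs.log))                     # the old version is still in the log
```

Because the drive only ever sees appends, this write pattern avoids disturbing previously written tracks, at the cost of leaving stale data behind that eventually has to be reclaimed.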

There are a couple of notable log-structured file systems in Linux. One is NILFS. It was included in the 2.6.30 kernel and has been shown to have great performance on SSDs. It is also designed for large file systems (exabytes) so it’s suitable for SMR drives.

Another example is F2FS which is a log-structured file system designed for NAND-based storage devices, particularly embedded devices such as cell phones and tablets. It currently is limited to 16TB file systems (3.94 TB for an individual file); however, it has been shown to be quite fast for some benchmarks.

We can expand on the idea of appropriate SMR file systems using a combination of drive layout modifications and a file system that understands the drive layout. This concept comes from the supposition that metadata is potentially changed more often than the data itself. For the best performance, the drive would need to be able to do small writes very quickly (write IOPS) for the metadata. SMR drives can’t really do this very well, but classic magnetic drives can do this much better than SMR drives. What if we could combine SMR tracks and “regular” drive tracks within the same drive and get the file system to write the metadata to one track type and the data to another track type? This is exactly what has been proposed.

In this new type of drive there are two track types. One track type would retain the current guard space between tracks, eliminating the overwrite issue of SMR drives, and the other track type would consist of SMR bands. The first track type is sometimes referred to as a “Random Access Zone” (RAZ) and stores the metadata for the file system. The second track type, which uses SMR tracks, is used to store the data itself. Putting file metadata on the RAZ tracks allows it to be easily updated without the read-write-write scenario associated with SMR tracks. The file data is put on the SMR tracks in as close to a sequential manner as possible to reduce any data overwrite that might need to take place.

However, mixing track types in a single drive reduces the areal density of the drive, and improving the areal density is the strength of SMR drives. In a study by Garth Gibson and his students from Carnegie Mellon University, they showed what happens to the areal density when SMR tracks alone are used and when mixed RAZ/SMR tracks are used. They computed the increase in areal density relative to a non-SMR drive using a simplified model.

When only SMR tracks are used, the theoretical increase in areal density was a factor of 2.25. When 1 percent of the tracks are devoted to RAZ, the areal density is barely affected, particularly when the number of tracks per band is large. Even 10 percent of the tracks being devoted to RAZ only slightly impacts the overall areal density. For a large number of tracks per band, the areal density gain drops from 2.25 to about 2.1.

For track type combinations to be effective, the file system has to be aware of where the RAZ tracks and the SMR tracks are located on the drive. Or conversely, the file system has to tell the drive which part of the data is metadata and which part is just data, and the drive uses the appropriate track type. Either approach will require both file system work and work on interfacing with the drive. One area this can be used very effectively is for object-based storage since this naturally separates metadata and data.
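The split between the two track types can be sketched as a simple routing rule. Everything in this example is hypothetical: the class `HybridDrive`, its method names, and the use of a dictionary and a list to stand in for the RAZ and SMR regions. It only illustrates the idea of sending metadata and data to different places.

```python
class HybridDrive:
    """Toy model of a drive with a Random Access Zone and an SMR region."""

    def __init__(self):
        self.raz = {}   # metadata: small records that can be updated in place
        self.smr = []   # file data: only ever appended, i.e. written sequentially

    def append_data(self, name, payload):
        location = len(self.smr)
        self.smr.append(payload)  # sequential write to the SMR region
        self.raz[name] = {"location": location, "size": len(payload)}
        return location

    def update_metadata(self, name, **fields):
        # In-place update in the RAZ: no neighboring track is disturbed.
        self.raz[name].update(fields)


drive = HybridDrive()
drive.append_data("report.dat", b"payload bytes")
drive.update_metadata("report.dat", mtime=1700000000)  # frequent, cheap update
print(drive.raz["report.dat"])
```

An object store maps onto this naturally, since it already keeps an object's metadata separate from its data.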

Renumber Tracks

A recent research paper suggested that a novel renumbering of tracks, or a different track usage pattern, could improve SMR performance until a large percentage of the tracks are used. Conventionally, a drive will use the tracks in increasing order. For example, let’s say a drive has 4 tracks. Track 1 will be used first, then tracks 2, 3 and 4. For an SMR drive you could potentially get a significant number of read-write-write sequences if data is written in this manner. But if you write the tracks in the order 4, 1, 2, 3 then you can fill up to 50% of the tracks before the performance decreases (read-write-write operations start happening instead of a simple write).
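The 4-track example can be checked with a small model. This is a sketch under stated assumptions rather than the paper’s method: tracks are numbered 1 to 4 within a band, updating track N in place disturbs only track N+1, and track 4 borders the guard space so updating it disturbs nothing. The function name `penalized_updates` is made up for the example.

```python
def penalized_updates(fill_order, tracks_per_band=4):
    """For each fill level, count in-use tracks whose in-place update
    would disturb a neighboring track that already holds data.

    Returns a list of (tracks_in_use, tracks_needing_read_write_write).
    """
    used = set()
    history = []
    for track in fill_order:
        used.add(track)
        penalized = sum(
            1 for t in used
            if t < tracks_per_band and (t + 1) in used  # neighbor holds data
        )
        history.append((len(used), penalized))
    return history


print(penalized_updates([1, 2, 3, 4]))  # [(1, 0), (2, 1), (3, 2), (4, 3)]
print(penalized_updates([4, 1, 2, 3]))  # [(1, 0), (2, 0), (3, 1), (4, 3)]
```

In this model the conventional order already has a penalized track when the band is half full, while the 4, 1, 2, 3 order stays penalty-free until more than half of the tracks are in use.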

Changing the order of tracks for writing fits well with SMR drives. Since SMR drives typically use bands, the order in which the bands are written can be controlled. For example, if the ordering is done in a round-robin fashion, you avoid writing to one band more than the others. In the case of the 4123 track pattern where you write in a round-robin fashion, track 4 of every band is written to before you move on to track 1 of each band.

The paper examined four re-ordering schemes of which two were very good in terms of performance relative to a conventional hard drive. The two schemes show a great deal of promise and only require a little change in drive firmware. I hope this gets implemented in production drives in the near future.

IO Patterns

All of these “fixes” or “adaptations” to SMR drives can have an impact on performance, areal density (the point of SMR), and overall capacity. But ultimately, the real impact depends upon the specific IO pattern hitting the drive. Do your application(s) do a great deal of “in-place” data updates? Do they do a great deal of IOPS?
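One rough way to get a feel for your IO pattern is to classify the writes in a trace. The sketch below assumes you already have a list of block addresses in the order they were written (how you capture that trace is outside the scope of this example), and the three categories and the function name `classify_writes` are simplifications chosen for illustration.

```python
def classify_writes(addresses):
    """Count sequential writes, in-place overwrites, and everything else."""
    counts = {"sequential": 0, "overwrite": 0, "random": 0}
    seen = set()
    previous = None
    for addr in addresses:
        if addr in seen:
            counts["overwrite"] += 1    # in-place update: costly on SMR
        elif previous is not None and addr == previous + 1:
            counts["sequential"] += 1   # continues the previous write: SMR-friendly
        else:
            counts["random"] += 1       # first write to a new, non-adjacent location
        seen.add(addr)
        previous = addr
    return counts


trace = [0, 1, 2, 3, 1, 7, 8, 2]
print(classify_writes(trace))
```

A trace dominated by the "overwrite" category is the kind of workload that will run into the read-write-write behavior most often.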

SMR Drive Examples

SMR drives are popping up all the time. Recently, Western Digital announced a 10TB drive with a 12Gb SAS interface that uses SMR. The drive uses 7 platters, or about 1.43 TB per platter. It has a 128MB cache, a five-year warranty, and a two million hour mean time between failures (MTBF) rating.

Seagate also announced an 8TB SMR drive. It too has a 128MB cache and spins at 5,900 RPM. It has an average read/write throughput of 150MB/sec (190MB/sec max), comes with a three-year warranty and an MTBF of 800,000 hours. The price on this drive is targeted at $260, or just a bit over 3 cents/GB. On Amazon you can order a Seagate SMR drive that has 6TB of capacity, a 128MB cache, and a three-year warranty for $281.00.
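The per-platter and per-gigabyte figures quoted above follow from simple arithmetic, reproduced here for reference. The helper names are made up, and the cost calculation assumes decimal units (1 TB = 1,000 GB).

```python
def tb_per_platter(capacity_tb, platters):
    return capacity_tb / platters


def cents_per_gb(price_usd, capacity_tb):
    # Assumes 1 TB = 1,000 GB, as drive vendors count capacity.
    return price_usd * 100 / (capacity_tb * 1000)


print(round(tb_per_platter(10, 7), 2))   # 1.43 TB per platter for the 10TB drive
print(round(cents_per_gb(260, 8), 2))    # 3.25 cents/GB for the 8TB drive
print(round(cents_per_gb(281, 6), 2))    # 4.68 cents/GB for the 6TB drive
```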

All of these drives are being labeled as archive drives because of the use of SMR tracks. But given the price, the areal density and the insatiable user desire for storage space, drive manufacturers are likely to develop technology to improve the performance of SMR drives.

Summary

In the never-ending quest for increased storage capacity, drive manufacturers have created a new type of hard drive called a Shingled Magnetic Recording (SMR) drive. SMR drives use tracks that overlap one another like shingles on a roof. The drives write the data to multiple tracks at a time, and the data is then trimmed to be the width of the drive read head. This reduces the size of the track storing the data, improving the areal density of the drive. However, it also introduces the issue of updating data in place or writing data that has neighboring data that is not to be disturbed.

Updating data in place means writing or overwriting the data that is already on a track. Since the tracks are so close together, this means that the neighboring tracks will also be overwritten. If there is any data there, the drive needs to first read it, put it somewhere else on the drive, and then update the data. This means that a simple write becomes a read-write-write operation, resulting in lower throughput and higher latency.

This article presents some possible solutions to the read-write-write issue that people have discussed. Some of these focus on the drive itself and some focus on the file system and others focus on a combination. One of the best and simplest options appears to be a simple re-ordering of how the tracks are used.

Whether the read-write-write problem affects you and your applications really depends upon the IO pattern. I’ve talked about the importance of knowing your IO patterns before, but they become even more critical when using SMR drives.

Right now SMR drives are very good for data that is read much more than it is written such as in an archive because of the data overwrite issue. But the ideas mentioned here show great promise in restoring the SMR drive performance allowing them to be used in a great variety of situations.
