If you Google “software-defined storage” (SDS), you’ll come across some good, informative articles. You’ll also come across equally informative articles that complain about the term, charging that it is more a marketing gimmick than a technical term.

Software-defined storage is certainly a broad term. Various storage vendors define SDS quite differently for different segments of the market.

Let’s start with the foundational definition of SDS and see how vendor products fit.

SDS Definition

In general (very general), SDS decouples the underlying storage hardware from the data services layer and automates storage management functions across multiple devices. Individual arrays may have more advanced data services and management functionality of their own. SDS increases overall storage infrastructure value either by running on commodity storage or by adding centralized data services on top of existing arrays.

There is no industry-wide SDS standard, but the Storage Networking Industry Association (SNIA) has taken a good stab at a definition. SNIA defines SDS as “virtualized storage with a service management interface.” The interface pools storage and presents it to applications as storage tiers using appropriate protocols. It also delivers data services across the virtual infrastructure regardless of the underlying hardware’s capabilities (or the lack thereof). According to SNIA, SDS should also include the following:

- Automate it. Since one of SDS’s big selling points is simplified management, automated storage maintenance is a given. Ideally, simplification extends to the administrative interface, so admins can easily build policies and monitor them.

- Give it standard interfaces. This is a rock-bottom requirement for SNIA’s SDS definition: centrally managing, provisioning and protecting data across multiple storage devices. Not every SDS product will support every type of protocol or data type, but most support file and block, and many support object as well.

- Scale it. One of the foundational reasons for the existence of SDS is easy scalability. Hot node or drive replacement is critical to scaling the background storage capacity without disrupting availability or performance. Provisioning storage pools for the new hardware should be dynamic and automated.

- Make storage consumption transparent. Automation is a central SDS capability, but a service portal should also let admins monitor storage consumption against resources and budget.

- Flexibly deliver it. Software-defined storage may execute from a variety of sources and still be SDS. Examples include physical and/or virtual appliances, storage systems built on commodity servers and internal disks, external hosts, and SDS hypervisors that provide advanced data services to all-flash arrays.
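To make the automation, scaling and consumption points above more concrete, here is a minimal Python sketch. It is an illustration only, not any vendor’s API: the `Device` and `StoragePool` classes, the tier names, and the “most free space” placement rule are all assumptions made up for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Device:
    """A hypothetical backing device; the pool only sees capacity and tier."""
    name: str
    tier: str            # illustrative tier label, e.g. "flash" or "disk"
    capacity_gb: int
    used_gb: int = 0

    @property
    def free_gb(self) -> int:
        return self.capacity_gb - self.used_gb


@dataclass
class StoragePool:
    """Pools devices and provisions volumes by policy rather than by device."""
    devices: list = field(default_factory=list)
    volumes: dict = field(default_factory=dict)

    def add_device(self, device: Device) -> None:
        # Scale out: new capacity joins the pool without touching existing volumes.
        self.devices.append(device)

    def provision(self, volume: str, size_gb: int, tier: str) -> str:
        # Automated placement (assumed rule): the matching device with the most free space.
        candidates = [d for d in self.devices
                      if d.tier == tier and d.free_gb >= size_gb]
        if not candidates:
            raise RuntimeError(f"no {tier} capacity for {size_gb} GB")
        target = max(candidates, key=lambda d: d.free_gb)
        target.used_gb += size_gb
        self.volumes[volume] = (target.name, size_gb)
        return target.name

    def consumption(self) -> dict:
        # Transparent consumption: (used, total) capacity in GB per tier.
        report = {}
        for d in self.devices:
            used, total = report.get(d.tier, (0, 0))
            report[d.tier] = (used + d.used_gb, total + d.capacity_gb)
        return report


pool = StoragePool()
pool.add_device(Device("array-a", "flash", 100))
pool.add_device(Device("server-b", "disk", 500))
placed_on = pool.provision("vol1", 40, "flash")
pool.add_device(Device("array-c", "flash", 200))   # capacity added while in use
placed_next = pool.provision("vol2", 150, "flash")
```

The caller asks for a size and a tier, never for a specific device. That is the decoupling the definition describes: hardware can be added behind the pool while the provisioning request stays the same.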

What’s the Difference Between SDS and Storage Virtualization?

Some people lump SDS and storage virtualization together. SDS is built on storage virtualization, and the two domains have a lot in common.

But they are not the same thing — not yet anyway.

As product development continues, they may well merge into a single hypervisor-controlled storage virtualization and automation platform. For right now, here’s the difference:

- Storage virtualization is all about abstracting storage. The virtualization layer provides servers with a logical view of underlying storage resources. The hypervisor combines storage into logical pools and centralizes policy-driven storage management and as-needed provisioning.

- Software-defined storage enables storage pools too, but it is more about abstracting storage management and data protection capabilities (usually replication, dedupe, and snapshots) from the underlying storage to the SDS hypervisor. Centralized automation and management operate across distributed storage devices and deliver the same data services to all of them.
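The distinction can be sketched in a few lines of Python. In this hypothetical example the devices can only store blocks, and the snapshot service lives entirely in a software layer above them. The class names are invented for illustration, and the full-copy snapshot is a deliberate simplification; real products typically use copy-on-write or similar techniques.

```python
import copy


class PlainDevice:
    """Hypothetical commodity device: stores blocks, has no snapshot feature."""

    def __init__(self, name):
        self.name = name
        self.blocks = {}

    def write(self, key, data):
        self.blocks[key] = data

    def read(self, key):
        return self.blocks[key]


class DataServicesLayer:
    """Software layer that adds a snapshot service on top of any device."""

    def __init__(self, devices):
        self.devices = {d.name: d for d in devices}
        self.snapshots = {}

    def snapshot(self, label):
        # The service is implemented here, so it covers every device
        # regardless of what the hardware itself can do.
        self.snapshots[label] = {
            name: copy.deepcopy(d.blocks) for name, d in self.devices.items()
        }

    def restore(self, label):
        # Roll every device back to the state captured under this label.
        for name, blocks in self.snapshots[label].items():
            self.devices[name].blocks = copy.deepcopy(blocks)


a = PlainDevice("commodity-a")
b = PlainDevice("commodity-b")
sds = DataServicesLayer([a, b])

a.write("blk0", "v1")
b.write("blk0", "x1")
sds.snapshot("before-change")
a.write("blk0", "v2")          # change made after the snapshot
sds.restore("before-change")
restored = a.read("blk0")      # back to the value captured in the snapshot
```

A pure virtualization layer would stop at presenting `a` and `b` as one logical pool. The SDS layer goes further by owning the data service itself.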

SDS Market Drivers

The explosive growth of data ultimately drives the move to software-defined storage. Storage must be highly scalable to ingest massive data without sacrificing performance. It also must rapidly provision and pool new storage resources across storage devices — and it needs to do this cost-effectively.

This architecture is particularly useful for unstructured data, which can be difficult to manage effectively in a traditional storage environment. This includes text files, emails, machine data, audio and video, log files, social data, sensor data, and much, much more. An SDS environment allows mixed protocols and data types to exist in a virtualized and centrally managed storage infrastructure.

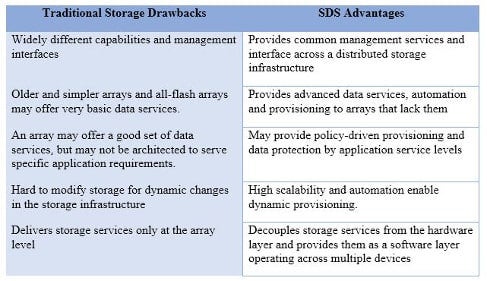

Let’s look at the drawbacks to traditional storage infrastructure and see how SDS can address them.

Vendors

Several storage and storage management vendors call their offerings software-defined storage. But the label isn’t always accurate. For example, a clustered file system is by no means automatically SDS, even though an SDS solution will provide services to an infrastructure containing filers.

We’ll stick with the definition of SDS as a method of decoupling storage hardware from the data services layer and providing a management service layer for provisioning, management and data services across a distributed infrastructure.

Dell EMC offers SDS via EMC ViPR, a software-only virtual appliance that runs on VMware ESX servers. The controller automates storage across EMC and third-party vendor storage by abstracting and pooling resources via a self-service catalog. Dell also markets SDS via its partnership with open-source Nexenta software. Nexenta virtualizes servers, storage and networks to create a software-defined data center running on Dell hardware. I would define this more as a hyperconverged environment, but it does have SDS features.

HPE StoreVirtual offers software-defined storage and a central management console for virtual environments without external shared storage for the VMs. StoreVirtual can run on multiple servers to create redundant clustered storage pools using internal disk or external storage.

IBM Spectrum Storage is a large family of storage management products for the flexible enterprise and web-scale business. It includes SDS features for highly scalable block and file storage with intelligent data services including data protection and archiving. Individual products are delivered as software, IBM appliances or cloud services. Spectrum Storage supports both IBM and third-party storage platforms.

NetApp’s offering may not strictly be SDS as it lacks a common management interface. However, the company has invested in placing SDS technology in Data ONTAP OS, OnCommand, FlexArray virtualization and the FAS enterprise storage series. Its SDS characteristics virtualize storage services and service levels, as well as offering APIs for automating workflow and customizing applications.

VMware is an SDS pioneer thanks to its software-defined data center (SDDC) concept. VMware abstracts data and data services from physical storage and creates virtual data stores for VMs. Adding VVols lets admins assign individual policy-driven configurations to different virtual stores.

FalconStor FreeStor SDS delivers data services across multiple storage infrastructures. The underlying engine uses Intelligent Abstraction to decouple applications, data and workloads from physical storage and fabric.

Lower Costs with SDS

I wrote earlier that massive data growth is the driver for SDS. The hoped-for benefit, and the whole idea behind the SDS business case, is a lower cost of storing and managing that data. Just as server virtualization solved a lot of tough problems by consolidating servers, SDS can address big storage issues by combining storage devices into a highly protected and automated whole.

Photo courtesy of Shutterstock.