Scale up and scale out are two different approaches to increasing the capacity of a system. Learn the differences between the two and how they can be used.

Enterprises running their IT workloads on a single server that’s struggling to keep pace with growing data storage and processing demands have a choice: scale up vertically with more powerful processors and increased storage capacity, or scale out horizontally by distributing workloads onto new servers that can meet their performance demands. Which is better, scale up vs. scale out? The short answer is that it depends—each path offers benefits and limitations based on the specific needs of the organization.

This article looks at both approaches to help you decide which is better suited for your particular requirements.

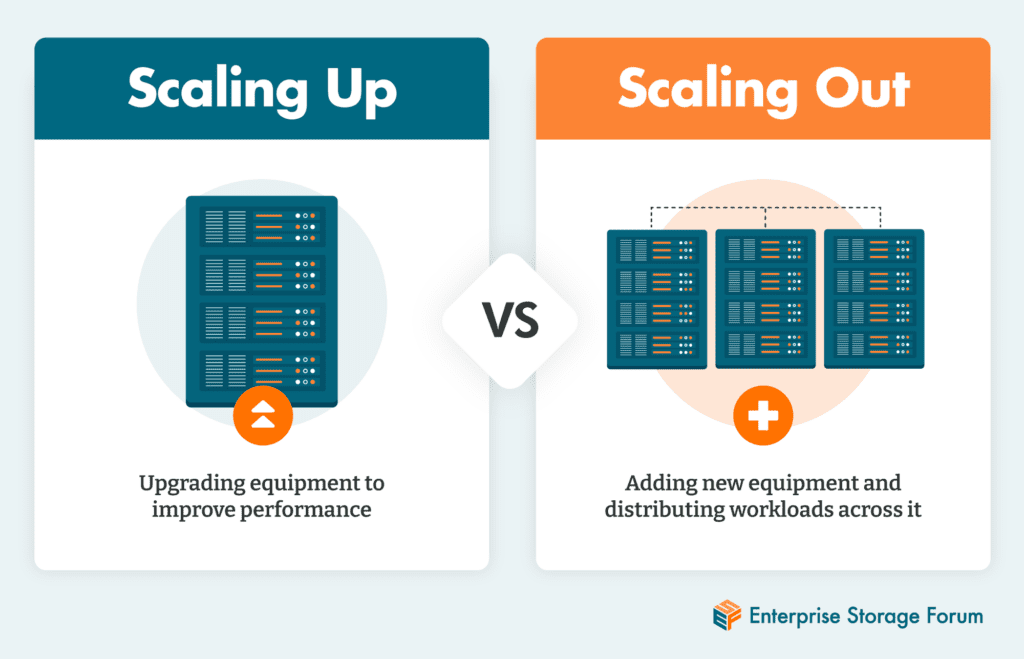

Put simply, scaling up is upgrading an existing server for more capacity and better performance, while scaling out is adding servers and distributing the workload across them to improve processing capabilities. The two approaches are different solutions to the same problem: how to increase memory and processing capacity to handle the data needs of modern businesses.

Enterprise data use is changing to incorporate emerging technologies like artificial intelligence, the internet of things (IoT), and analytics that require new processing and storage capabilities. Collectively, these changes are compelling IT departments to develop strategies for expansion, and many of them will need to decide whether to scale up or scale out.

These approaches apply to a wide range of technologies, from individual computers and network-attached storage (NAS) to servers running powerful applications and databases.

Scaling up is the process of augmenting or replacing a server’s existing processor with more powerful components that can process workloads faster. In essence, scaling up expands processing capability vertically within the footprint of a single server. This “scale up” of a server can be accomplished in two ways: by adding to the existing hardware, or by replacing it.

Scaling up simply means upgrading the processing components of a computer. In this context, it refers specifically to a server, but there are other examples.

A home computer that gets a new operating system version might need more random access memory (RAM) or a bigger, faster solid state drive (SSD). Both upgrades would be ways of scaling up that machine. Similarly, a database provider might release a new version that offers customers new features but also adds processing requirements. A company running that database could upgrade its servers, or scale up, to meet the need.

The advantage of using a scale-up approach is that the processing upgrade is simplified, as there’s only a single server to worry about. All that’s needed is to enhance or replace processing and memory so the machine runs faster. There is no need to reconfigure software or to worry about integration or potential latency caused by linking the server with other servers and sharing workloads.

The disadvantage of a scale-up approach is a lack of redundancy—if work depends upon a single server and that server fails, workloads are stalled. This can be mitigated by replicating processing and data on another server in the cloud or a data center. Because this is using more than one server, even if it is just for system recovery and failover, the argument could be made that this is technically a scale-out.

Scale up is not a viable strategy when an organization’s operations are distributed, for example when internet of things (IoT) devices and robotics are used in remote manufacturing plant networks, or when server support is needed for separate point-of-sale operations in retail stores.

Scaling out takes an entirely different approach to increasing processing power. Scaling out, or scaling horizontally, means moving some of the organization’s workloads off the single existing server and distributing them across several servers.
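One way to picture the distribution of workloads across servers is a simple placement routine. The sketch below is illustrative only: the function `distribute`, the server names, and the load figures are all hypothetical, and it assumes each workload's load can be estimated as a single number. It assigns each workload to whichever server currently carries the least load.

```python
import heapq


def distribute(workloads, server_names):
    """Assign each workload to the server with the least total load so far.

    workloads: dict mapping a workload name to its estimated load (arbitrary units).
    Returns a dict mapping each server name to the list of workloads placed on it.
    """
    # Min-heap of (current_load, server_name); the lightest server is always first.
    heap = [(0, name) for name in server_names]
    heapq.heapify(heap)
    placement = {name: [] for name in server_names}

    # Place the heaviest workloads first so large jobs do not pile up on one server.
    for workload, load in sorted(workloads.items(), key=lambda kv: kv[1], reverse=True):
        current_load, name = heapq.heappop(heap)
        placement[name].append(workload)
        heapq.heappush(heap, (current_load + load, name))
    return placement


# Hypothetical workloads and load estimates, for illustration only.
workloads = {"erp": 50, "analytics": 40, "web": 30, "email": 20, "backup": 10}
placement = distribute(workloads, ["server-a", "server-b", "server-c"])
print(placement)
```

With these sample figures, each of the three servers ends up carrying the same total load. Real schedulers weigh many more factors (memory, network locality, licensing), so this should be read as a sketch of the idea rather than a production approach.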

This approach provides the needed processing and memory capabilities with room to grow, without having to repeatedly upgrade memory and processing on the single existing server to meet increased demands. In addition, each new server can be upgraded with additional memory and processing if needed, or workloads can be expanded by creating multiple virtual machines on each server.

Scaling out computing capability by distributing workloads across multiple servers, instead of just upgrading a single server, began as something called distributed processing. Distributed processing (and distributed business operations) has since expanded to include remote plants and offices that operate independently of each other, as well as employees working in the field or from home.

For today’s businesses, it’s almost a necessity to install separate servers and networks in remote facilities to prevent the latency caused by centralizing real-time operations and to avoid the expense of high-throughput, high-bandwidth data pipelines.

Scale out is also a preferred method for supporting disaster recovery and business continuity: when transactions are written to two machines at once, failover from one to the other is easy. In addition, some applications span multiple clouds and on-premises computers, and such scenarios require spreading application workloads across multiple computers and processors.
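The idea of writing each transaction to two machines so that either can take over can be sketched in a few lines. The classes `Node` and `MirroredPair` below are hypothetical stand-ins, not any product's API, and the in-memory dictionaries merely simulate servers. The sketch assumes both machines are reachable at write time.

```python
class Node:
    """A stand-in for one server: an in-memory store with an up/down flag."""

    def __init__(self, name):
        self.name = name
        self.online = True
        self.records = {}

    def write(self, key, value):
        if not self.online:
            raise ConnectionError(f"{self.name} is offline")
        self.records[key] = value

    def read(self, key):
        if not self.online:
            raise ConnectionError(f"{self.name} is offline")
        return self.records[key]


class MirroredPair:
    """Writes every transaction to both nodes; reads fail over to the standby."""

    def __init__(self, primary, standby):
        self.primary = primary
        self.standby = standby

    def write(self, key, value):
        # The write counts as committed only after both nodes have accepted it.
        self.primary.write(key, value)
        self.standby.write(key, value)

    def read(self, key):
        try:
            return self.primary.read(key)
        except ConnectionError:
            # Failover: the standby already holds the same data.
            return self.standby.read(key)


# Hypothetical usage: the primary goes down after a transaction is written.
pair = MirroredPair(Node("site-1"), Node("site-2"))
pair.write("order-1001", "paid")
pair.primary.online = False
result = pair.read("order-1001")
print(result)
```

Because the standby already holds every committed transaction, the read succeeds even with the primary offline. A real deployment would also have to handle the case where only one of the two writes succeeds, which this sketch deliberately leaves out.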

The primary disadvantage of scaling out is complexity. Troubleshooting can be more difficult because it involves analyzing run logs across multiple servers to locate and resolve issues.
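A common first step in this kind of troubleshooting is to combine the per-server logs into one timeline. The sketch below is a minimal illustration: the function `merge_logs`, the server names, and the log entries are made up for the example, and it assumes each server's log is already in time order and that the servers' clocks are reasonably synchronized.

```python
import heapq


def merge_logs(logs_by_server):
    """Merge per-server logs into a single timeline ordered by timestamp.

    logs_by_server: dict mapping a server name to a list of (timestamp, message)
    tuples, each list already sorted by timestamp.
    Returns a list of (timestamp, server, message) tuples.
    """
    tagged = [
        [(ts, server, msg) for ts, msg in entries]
        for server, entries in logs_by_server.items()
    ]
    # heapq.merge lazily combines already-sorted inputs into one sorted stream.
    return list(heapq.merge(*tagged))


# Hypothetical log entries; ISO 8601 timestamps sort correctly as plain strings.
logs = {
    "web-1": [
        ("2024-01-01T10:00:01", "request received"),
        ("2024-01-01T10:00:04", "timeout waiting for db-1"),
    ],
    "db-1": [
        ("2024-01-01T10:00:02", "query started"),
        ("2024-01-01T10:00:03", "lock wait exceeded"),
    ],
}
timeline = merge_logs(logs)
for timestamp, server, message in timeline:
    print(timestamp, server, message)
```

Read in order, the merged timeline shows the database's lock wait preceding the web server's timeout, a relationship that is hard to see when the two logs are inspected separately.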

Ownership is another concern. As workloads get distributed across multiple sites and clouds, different parties might be accountable for different pieces. IT might maintain some of the technology, vendors might maintain other parts, and some might even be maintained by end-user departments. It takes longer to resolve performance issues when multiple players are involved.

Scaling out can also increase computing costs and security risks. More computing resources and power are consumed, and there are more entry points that attackers can exploit.

The way businesses use data is changing, and enterprises seeking to keep pace with the demands of collecting, storing, and processing huge volumes of data need hardware that’s up to the task. Many of them will find themselves deciding whether to upgrade existing servers (scale up) or expand with additional servers (scale out).

As more processing moves toward the edge and outside of enterprises, a scale-out approach makes sense. Even so, processing workloads will increase at the edge, and companies will likely also need to scale up resources for computers in distributed locations. Central data center processing needs are growing in parallel, and many companies have enterprise resource planning (ERP) systems and large databases that they want to keep on a single mainframe or server for processing and security reasons.

When comparing features and limitations of scaling up against those of scaling out, most businesses will come to the same conclusion—the two are not mutually exclusive. In fact, most companies will use both approaches, as they provide more options and a better expansion path for future growth when done in tandem. The question is not scale up vs. scale out, but how to incorporate both means of expansion to best position the organization for future growth.

Read 5 Types of Enterprise Data Storage to learn more about how businesses are approaching the demands of retaining all the information they need to power their day-to-day work.

Mary E. Shacklett is a contributing writer to Enterprise Storage Forum and Datamation. She's also president of Transworld Data, and as an IT consultant and analyst has covered every aspect of IT with more than 1,000 published articles. She has a B.S. degree in Comparative Literature and Education from the University of Wisconsin, an M.A. degree in American Studies from the University of Southern California, and a JD degree from William Howard Taft University in Orange County, CA. In her spare time, Mary writes fiction, plays jazz, and manages a 75-acre forest.


Property of TechnologyAdvice. © 2026 TechnologyAdvice. All Rights Reserved
