DR Sites ensure operations continue regardless of a disaster. Explore site differences. location importance and cost. Click here now.

Also see: Disaster Recovery Planning

A Disaster Recovery (DR) site is a physical location separate from a company’s primary headquarters. Its purpose is to keep the organizational systems running in the event of a power outage, cyberattack, network failure, natural disaster, unexpected downtime, sabotage or other event that takes down the primary location.

Depending on the type of DR site, and what DR services they’re using, the facility might be online immediately, or there might be a short or long delay. As well discuss below, the type of DR site selected depends on the needs and financial resources of the organization.

DR sites ensure that operations can continue regardless of any mishap or disaster. They can also function as a means of replicating data from the main site to ensure minimal data loss. A DR site greatly reduces risk to the organization and eliminates the possibility of a devastating data loss incident or a period of downtime that could cripple the organization.

For mission-critical operations, Recovery Point Objectives (RPOs) would typically be less than 15 minutes, and Recovery Time Objectives (RTOs) less than an hour. In other words, in the event of an incident, the disaster recovery site would be operational within an hour, or would lose less than 15 minutes of data.

In the current disaster scenario, cyberattacks and ransomware have become a realistic new threat resulting in significant outages. The modern DR site, therefore, must cater to that possibility and ensure the logical separation of production and recovery from a network access standpoint, while having multiple snapshots of data to enable cyber recovery. It is also key to guarantee that the recovery technology includes automation and orchestration to deliver minimal downtime and aggressive RTOs.

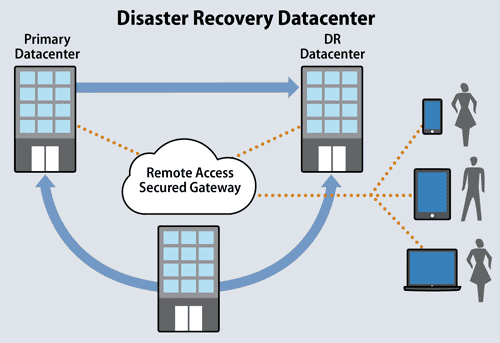

A disaster recovery site use a remote access secured gateway as a bridge between the primary and DR back up site.

The three types of DR Sites are hot sites, cold sites, and warm sites. The choice of what type of DR site boils down to the desired RTOs and RPOs i.e., how much downtime and data loss is acceptable – or affordable.

This is a location where the target environment is already up and running and can be immediately activated by a failover. There are also high availability architecture options where you can have multiple nodes in a clustering or load balancing setup. In those cases, an outage of a single node does not impact availability. Essentially, you would have two or more instances of your production environment activated, and therefore would incur significant costs. Anyone operating a hot site, then, is multiplying IT costs by two or more times.

This is a target DR environment that needs to be activated once a recovery process is initiated. Infrastructure exists but needs to be started up and fully launched. This environment might sometimes be used for dev/test and can be repurposed for DR at a time of need. As a result, costs are much lower for a cold site.

In between a hot site and cold site, a warm site is where you may have compute available on standby that can be easily connected to your recovered (or already replicated) data. Costs range in between a hot and cold site.

Furthermore, other elements may come into play. For instance, the type of data protection strategy. That is, backups that are compressed data and that need to be hydrated versus already-available data in replicated data that can simply be attached/mounted to your compute. And recovery automation/orchestration software can impact the speed and cost of recovery.

Mission critical systems typically require hot sites with high availability architectures and have near zero RTOs/RPOs. Business-critical systems, though, increasingly use DR as a Service (DRaaS) cloud recovery technologies that have replicated data and orchestrated recovery with RPOs of less than 15 minutes and RTOs of less than 1 hour. Less important systems can rely on cold site architectures with backup-based protection, typically offering RPOs of around 24 hours and RTOs of days.

“Costs and features vary based on what you need for RTO and RPO,” said Greg Schulz, an analyst at StorageIO Group. “Also keep in mind what you need (and can afford) for your apps and data. Look beyond cost and consider the value and business benefit of having apps and data available, accessible and usable.”

Bottom line: the better the RTO and RPO, the higher costs will rise. In some organizations, prioritization is used to reduce costs. Certain core applications and functions are allocated high RTO/RPO, whereas routine functions have slower recovery periods.

It’s no good to have a DR site in the basement or across the street. The event that strikes the primary location is likely to impact the secondary location, too. A best practice, therefore, is to position it at a distance of more than 30 miles. But some consider 30 miles as too little.

“You really need at least 200 miles between sites, preferably on separate power grids and with separate and redundant network access,” said Chris VanWagoner, Chief Strategy Officer, Commvault.

The location of a disaster recovery site is decided with reference to factors such as:

If the main site, for example, may be subject to flooding or earthquakes, the DR site should be situated somewhere that won’t suffer these same problems. Similarly, a DR site should belong to a different part of the power grid and be on the network of another carrier. Otherwise, it would be susceptible to the same failures inflicting downtime on the primary location.

A DR site should be sized appropriately to handle the expected workload. An organization’s primary IT systems are designed to meet the needs of the day to day business activities. If the DR/BC plan must ensure full operational capabilities for the entire organization, the DR site needs to be sized and outfitted correctly. Often, however, financial considerations enter in.

Many DR sites are sized for minimal functionality. They have just enough to keep crucial systems running but would break under the stress of trying to support every one of the day-to-day business operations.

Ownership, too, can vary. Sometimes a DR site will be self-owned, sometimes hosted by another company. There are also co-location facilities that look after the DR needs of multiple organizations. In whatever way the disaster recovery site operates, its function is to recover rapidly, offer failover capabilities and enable the organization to resume processing.

“Having your own facility vs. using somebody else’s co-location, hosted or cloud comes down to financial, security and control considerations,” said Schulz.

Some organizations have the financial means, the personnel resources and/or a regulatory requirement to operate their own disaster recovery site. These internal sites are generally expensive, but they can be justified in some businesses by the much higher potential losses that could be incurred due to downtime.

In some financial organizations, for example, a day’s downtime can add up to the annual costs of maintaining their own hot site. There are also some industries were an internally managed DR site is mandated by compliance regulations.

For most organizations, though, external sites remain the best option. It is often far cheaper to offload DR functions to a specialist provider of data center services, a cloud provider, or a colocation provider. For some, the internal resources aren’t there to run an internally operated site. External sites can either be full service, partial service, or are just rented locations that house equipment that can be plugged in by the organization in the event of a disaster.

Increasingly, cloud storage can provide a scalable and cost-effective approach to DR. Since the cloud consists of many geographically dispersed physical locations, it’s possible for some to use these qualities to achieve an effective DR site plan at reduced costs.

Those companies choosing this route, though, are warned to pay close attention to application compatibility. “The cloud cannot run everything that’s in today’s data center such as mainframe, and certain applications,” said VanWagoner.

Enterprise Storage Forum offers practical information on data storage and protection from several different perspectives: hardware, software, on-premises services and cloud services. It also includes storage security and deep looks into various storage technologies, including object storage and modern parallel file systems. ESF is an ideal website for enterprise storage admins, CTOs and storage architects to reference in order to stay informed about the latest products, services and trends in the storage industry.

Property of TechnologyAdvice. © 2026 TechnologyAdvice. All Rights Reserved

Advertiser Disclosure: Some of the products that appear on this site are from companies from which TechnologyAdvice receives compensation. This compensation may impact how and where products appear on this site including, for example, the order in which they appear. TechnologyAdvice does not include all companies or all types of products available in the marketplace.